#AI

#DevTool

#B2B

(Apprenticeship, launched on the internal platform)

I designed an AI-powered SQL IDE from 0 to 1 that reduced query execution time by 86% (255s → 35s), democratizing database optimization for both technical and non-technical users at Google.

Product, Database, Engineering, and Machine Learning Teams

Product Design

UX Research

CONTRIBUTION

Because this was a 0-to-1 product, I wore multiple hats across design, research, and development, collaborating with 4 teams to ship a complete solution in 4 months.

0-to-1 Product Design

Designed the complete user experience from concept to launch, defining interaction patterns for AI-powered query optimization

User Research @Google Madison office

Conducted 15+ user interviews and usability tests at Google Madison, talking to analysts, DBAs, and engineers in their actual workspaces

Cross-Functional Collaboration

Worked closely with the PM, the Database team, backend engineers, ML engineers, and my Google mentor

Agile Environment

Followed a 1-week Agile sprint cycle, using Jira to manage tasks and track progress

SOME NUMBERS...

Working alongside Google's world-class team raised my bar for what great product design looks like

This wasn't just an apprenticeship — it was a masterclass in collaboration. I saw how design, engineering, and product unite to solve complex problems, and left with a deeper understanding of what great product design requires.

86%

reduction in query time

4.9/5.0

AI trust score

98%

satisfaction rate

15+

usability tests

33

prototypes

2

presentations to stakeholders and the Head from HQ

PROBLEM & CHALLENGE

The Security Paradox

AI only gave formulas. Security blocked plugins. Every query became a research project.

DEFINE CHALLENGE

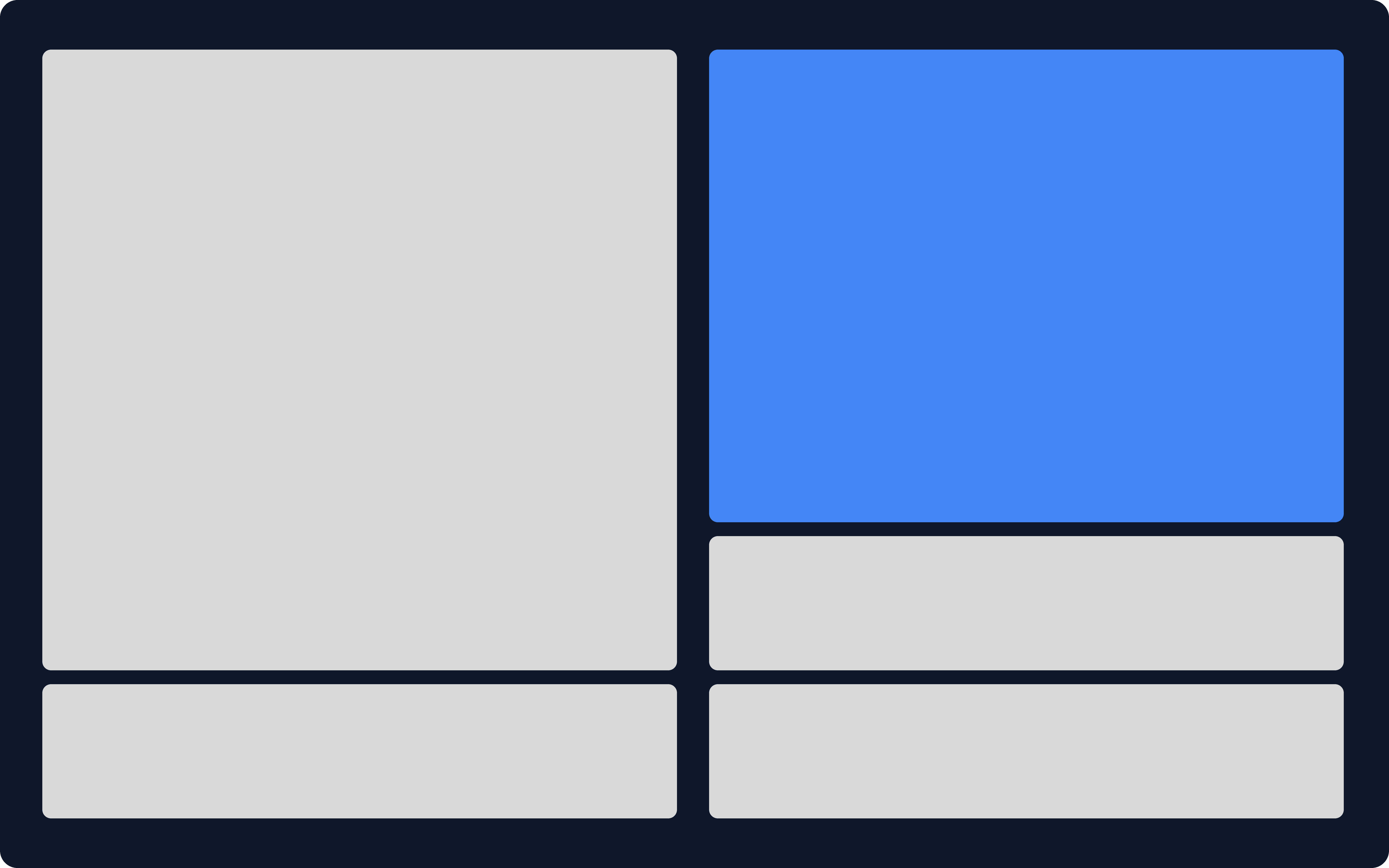

LAYOUT ITERATION

One side or two? The answer changed everything

Before

Option A: Single-Panel Replace

Early designs placed the AI-rewritten query directly in the editor — simple, clean, but missing something critical. When we tested with users, the feedback was unanimous: "I don't know what changed. I don't know if I can trust this."

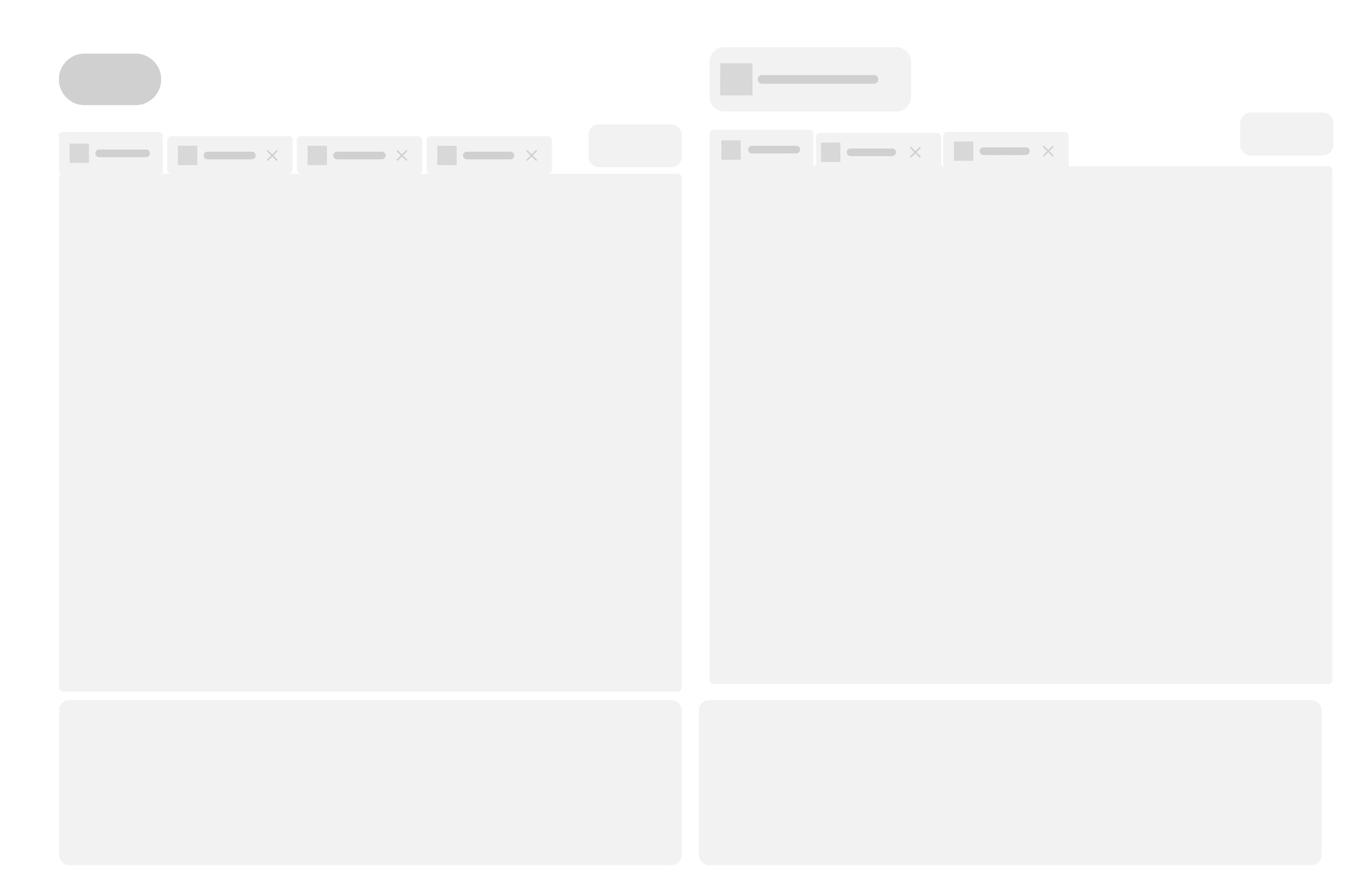

After

Option B: Side-by-Side Comparison

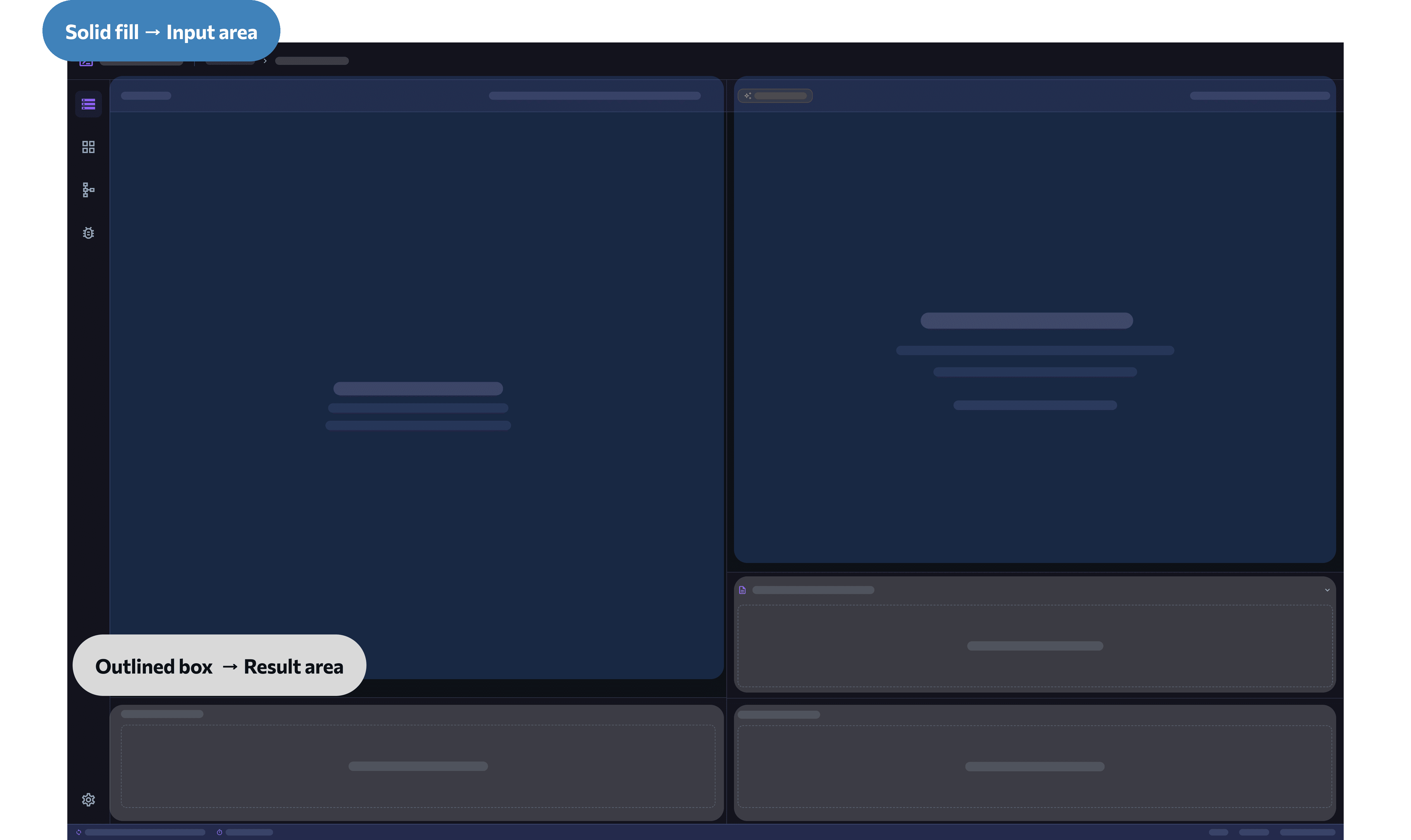

DEFINE LAYOUT

One canvas, three modes — adapting to where you are in your workflow

Standard

The default layout, offering a balanced view of both the original query and AI suggestion before making any changes

User Input + Result

Optimized for writing and testing your own query, with results surfaced directly alongside for immediate validation

AI Rewrite + Result

Optimized for reviewing the AI-optimized query and validating performance improvements before accepting the change

Solid Means Write.

Outlined Means Read.

Solid fill → Input area

Where you write. The solid background signals an active, editable space.

Outlined box → Result area

Where you read. The outlined treatment signals output — results and AI responses surface here.

Clearer Guidance on Landing Page

Early versions dropped users into a blank IDE with no direction, and the empty state created friction before they typed a single character. I redesigned the landing state to guide users through the workflow from the first second.

AI Feature Placement

Button: "AI Rewrite" — clear action verb

Hover: "See how AI would restructure your query for better performance"

100% click-through rate (CTR) with zero confusion in testing

HOLD ON FOR A SECOND...

My engineering past made me a more complete designer — and a better collaborator

My frontend background taught me that error states are never "engineering's problem" — they're a design decision.

So before development started, I sat down with our frontend engineer and designed every failure point in advance.

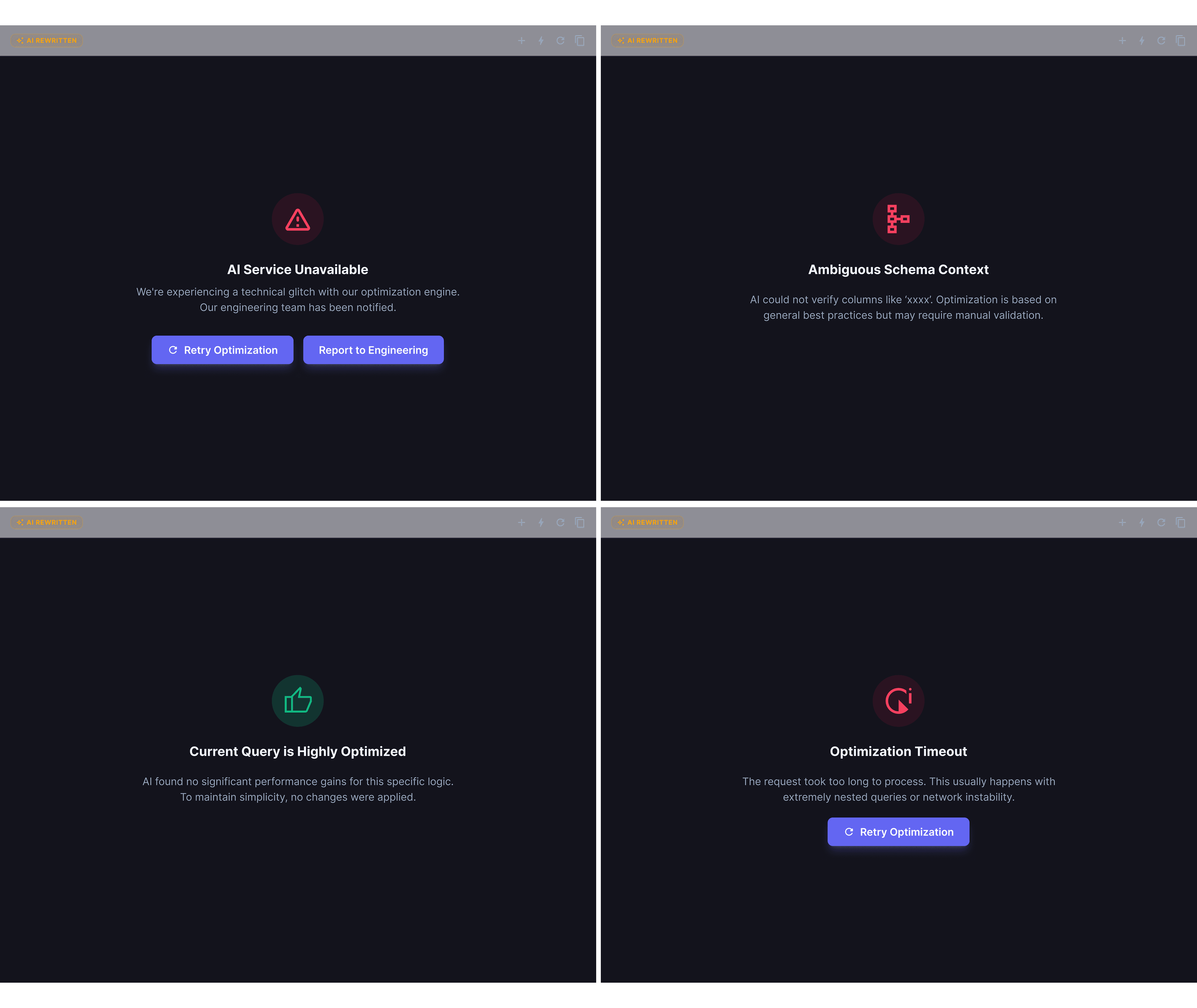

Anticipating Edge Cases

4 Error States

to Guide the User's Next Step

AI Service Unavailable

Ambiguous Schema Context

Current Query is Highly Optimized

Optimization Timeout
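One way the four error states above could be handed off to engineering is as a single lookup table, so no failure path ships without a next step. The following is a minimal sketch under my own assumptions: the identifiers (`RewriteError`, `ErrorState`, `resolve_error`), the guidance copy, and the retry flags are all hypothetical illustrations, not the shipped wording or implementation.

```python
from dataclasses import dataclass
from enum import Enum


class RewriteError(Enum):
    """The four failure states named above (identifier names are hypothetical)."""
    SERVICE_UNAVAILABLE = "AI Service Unavailable"
    AMBIGUOUS_SCHEMA = "Ambiguous Schema Context"
    ALREADY_OPTIMIZED = "Current Query is Highly Optimized"
    TIMEOUT = "Optimization Timeout"


@dataclass(frozen=True)
class ErrorState:
    title: str
    next_step: str   # illustrative copy only, not the shipped wording
    can_retry: bool  # assumed behavior: whether a retry action is offered


# Every error maps to a message that tells the user what to do next.
ERROR_STATES = {
    RewriteError.SERVICE_UNAVAILABLE: ErrorState(
        RewriteError.SERVICE_UNAVAILABLE.value,
        "Run your original query, or try the rewrite again shortly.",
        True,
    ),
    RewriteError.AMBIGUOUS_SCHEMA: ErrorState(
        RewriteError.AMBIGUOUS_SCHEMA.value,
        "Specify which table or schema the query should use.",
        False,
    ),
    RewriteError.ALREADY_OPTIMIZED: ErrorState(
        RewriteError.ALREADY_OPTIMIZED.value,
        "No rewrite needed. You can run the query as written.",
        False,
    ),
    RewriteError.TIMEOUT: ErrorState(
        RewriteError.TIMEOUT.value,
        "Try again, or simplify the query before rewriting.",
        True,
    ),
}


def resolve_error(error: RewriteError) -> ErrorState:
    """Return the user-facing state for a failure."""
    return ERROR_STATES[error]
```

Keeping the states in one table makes a missing case easy to spot in review: a new member of `RewriteError` without an entry fails the lookup immediately instead of surfacing as a blank screen.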

FINAL DESIGN

The Complete Flow: From Input to Insight

👀 How it works

Input → Rewrite → Compare → Execute → Learn

Users input a query, click "AI Rewrite," and instantly see a side-by-side comparison with performance metrics and highlighted changes. They execute both versions and compare real outcomes before deciding which to keep.

Highlight 1: Difference Highlighting

Learning through comparison, not just optimization

Inline highlights and an explanation panel show what changed and why, turning optimization into education.

💡 UX Insight: Show the logic, not just the result. Users who understand the change write better queries on their own
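To make the highlighting idea concrete, here is a rough sketch of how changed lines between the original and the rewritten query could be computed. It uses Python's standard `difflib` module as a stand-in; the production IDE's diffing approach is not described here, and the function name `diff_queries` and the sample queries are my own assumptions.

```python
from __future__ import annotations

import difflib


def diff_queries(original: str, rewritten: str) -> list[tuple[str, str]]:
    """Label each query line as 'same', 'removed', or 'added'."""
    a = original.strip().splitlines()
    b = rewritten.strip().splitlines()
    labeled: list[tuple[str, str]] = []
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            labeled.extend(("same", line) for line in a[i1:i2])
        else:
            # 'replace', 'delete', and 'insert' all reduce to removed/added lines.
            labeled.extend(("removed", line) for line in a[i1:i2])
            labeled.extend(("added", line) for line in b[j1:j2])
    return labeled


# Hypothetical sample queries, for illustration only.
original_sql = "SELECT *\nFROM orders\nWHERE status = 'open'"
rewritten_sql = "SELECT id, total\nFROM orders\nWHERE status = 'open'"

for label, line in diff_queries(original_sql, rewritten_sql):
    print(f"{label:>8} | {line}")
```

The UI would then render `removed` and `added` lines as inline highlights and pair each change with its explanation in the side panel.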

Highlight 2: Outcome Comparison

Proof over promises

Side-by-side execution results prove the improvement with real data, not predictions

💡 UX Insight: Transparency = trust. Seeing measurable impact closes the credibility loop
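The comparison itself is simple arithmetic on the two measured run times. As a small sketch, using the headline measurement from this project (255s before, 35s after) and a hypothetical `Outcome` type of my own naming:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    """Measured run times for the original and the rewritten query."""
    original_seconds: float
    rewritten_seconds: float

    @property
    def seconds_saved(self) -> float:
        return self.original_seconds - self.rewritten_seconds

    @property
    def percent_reduction(self) -> float:
        if self.original_seconds <= 0:
            raise ValueError("original_seconds must be positive")
        return 100 * self.seconds_saved / self.original_seconds


outcome = Outcome(original_seconds=255, rewritten_seconds=35)
print(f"Saved {outcome.seconds_saved:.0f}s ({outcome.percent_reduction:.0f}% faster)")
```

Because both numbers come from real executions rather than estimates, the percentage shown to the user is a measurement they can verify themselves.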

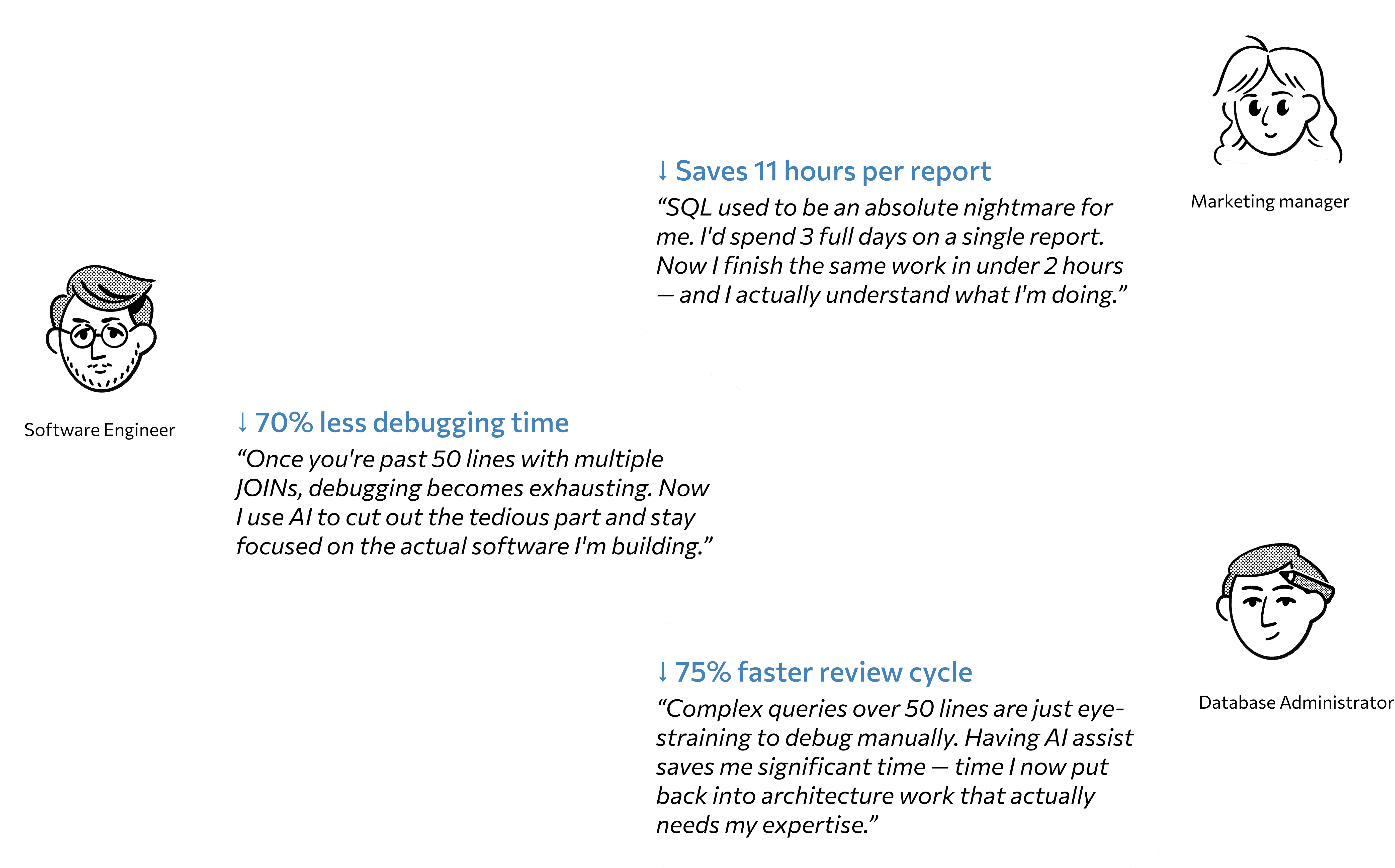

USER'S SUCCESS METRICS

Real people, real time saved

What I Learned

✅

Transparency builds trust faster than automation builds efficiency

Users adopted AI when they could see what changed and why, not when it worked like magic.

✅

The best AI tools teach, not replace

Non-technical users didn't want the AI to do their work; they wanted to get better at SQL themselves.

✅

Design error states before success states

My frontend background taught me: how you handle failure shapes user trust more than how you design the happy path.